Lecture:

Wed 10:15AM-12:15PM, 60 Fifth Ave C15

Instructor:

Jinyang Li, Office hour: 1-2pm Mon, 60FA 410

Course Assistant:

David Pissarra, Office hour: 2-3pm Wed, 60FA 446

Course forum:

Course information

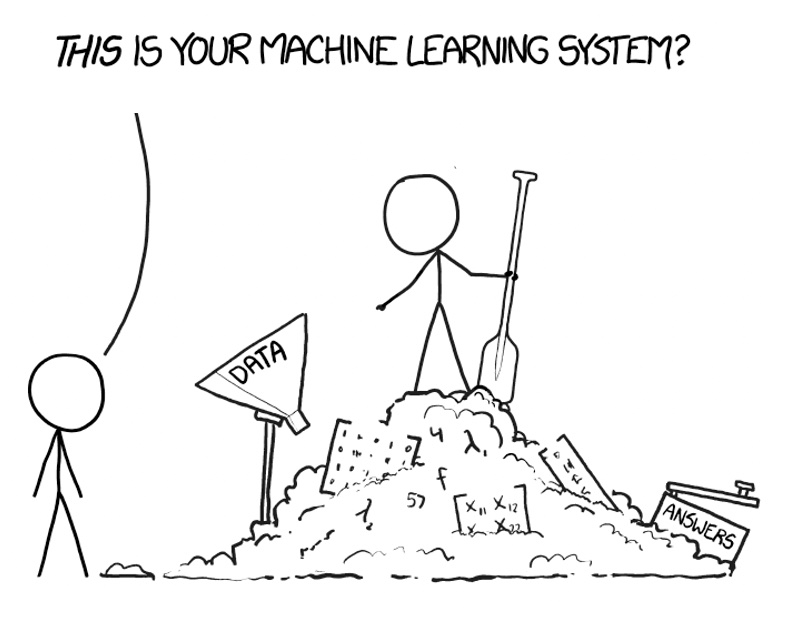

This class will discuss recent research on machine learning systems, especially those designed to accelerate deep learning workloads. We will take a deep dive into how these systems allow ML models to be written in a high-level language and then executed as low-level kernels on parallel hardware accelerators. Topics covered in this course include the basics of neural networks, how neural networks are programmed and executed by today's deep learning frameworks, automatic differentiation, deep learning accelerators, distributed training techniques, computation graph optimizations, and automated kernel generation.

Prerequisites:

- Comfortable with C/C++ programming.

- Familiarity with the UNIX environment.

- Familiarity with ML or deep learning is a plus.

Academic Integrity

Please read our academic integrity policy carefully.